Hello everyone,

My name is Nisarg Wath. I’m interested in contributing to RTEMS for GSoC 2026.

I’ve started exploring the “Cobra Static Analyzer and RTEMS” project idea. So far I have set up the RTEMS development environment, built the RTEMS toolchain (sparc-rtems7), and installed Cobra (v5.3) on macOS.

As an initial experiment, I ran Cobra on the RTEMS source code, starting with the cpukit/score directory. Cobra was able to parse about 8776 files (~17.8M tokens) from the RTEMS tree. I also tried running the MISRA rule set to see what kind of results Cobra produces.

If anyone has suggestions on which RTEMS modules would be good to analyse first, or any advice on using Cobra with RTEMS, I’d appreciate it.

Thanks!

Nisarg

Probably cpukit/rtems/src is a manageable place to start. The score is good and eventually should be supported, but it is also quite complex. You should start somewhere simpler to work through the flow of the tools.

The main issue with these static analysis tools is making them seamless to use and integrating them with the RTEMS developer ecosystem.

Thanks for the suggestion! I’ll start experimenting with cpukit/rtems/src and see what kind of results Cobra produces there. I’ll also try to understand how the analysis workflow could be automated so it integrates better with the RTEMS development process.

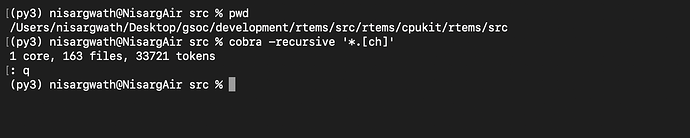

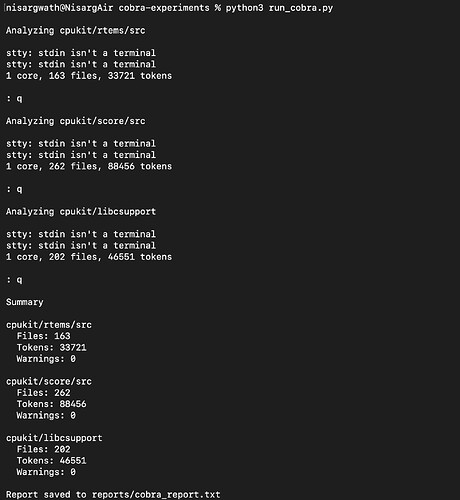

Hi, following the suggestion to start with a simpler module, I ran Cobra on cpukit/rtems/src first.

Running Cobra inside that directory:

cobra -recursive '*.[ch]'

Cobra processed about 163 files (~33k tokens).

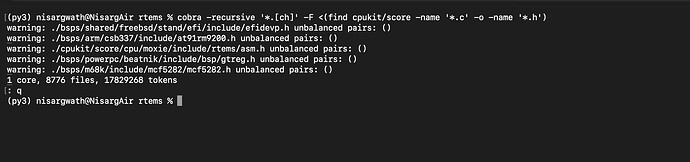

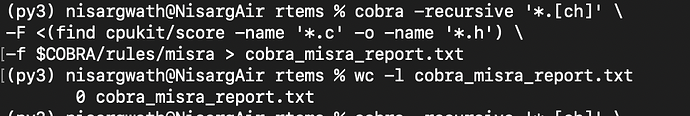

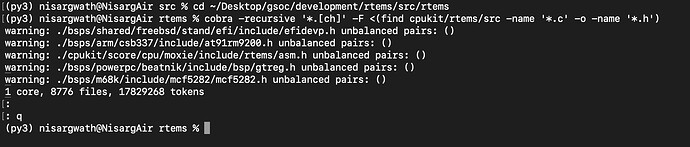

Then I ran Cobra from the RTEMS root directory using a file list for that module:

cobra -recursive '*.[ch]' -F <(find cpukit/rtems/src -name '*.c' -o -name '*.h')

During the scan Cobra reported a few warnings like “unbalanced pairs: ()”, mostly in BSP or architecture-specific header files.

Next I’ll try running the same analysis on cpukit/score/src and start looking into how the warnings could be filtered or summarized.

Thanks.

Hi,

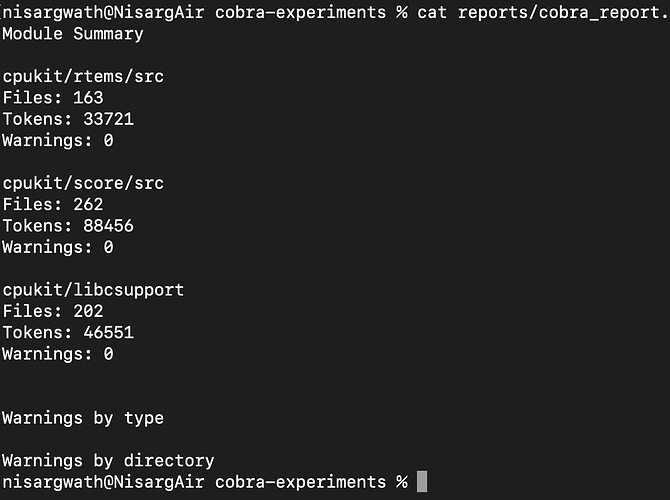

I did a bit more experimenting with Cobra and wrote a small script to automate running the analysis on a few RTEMS modules.

From the initial runs I got something like:

| Module | Files | Tokens |
| --- | --- | --- |
| cpukit/rtems/src | 163 | 33721 |
| cpukit/score/src | 262 | 88456 |
| cpukit/libcsupport | 202 | 46551 |

It looks like cpukit/score/src is noticeably larger than cpukit/rtems/src (about 2.6 times as many tokens), so static analysis there might be a bit more complex.
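For anyone who wants to reproduce per-module runs like these, here is a minimal sketch of what such a driver script might look like. It is not the script used for the numbers above. It assumes `cobra` is on the PATH and reuses only the `cobra -recursive '*.[ch]'` invocation shown earlier in the thread; the function names, the log file naming, and the injectable `runner` parameter are all hypothetical. The file count comes from a plain directory walk and may not match the count Cobra itself reports.

```python
import subprocess
from pathlib import Path

# Modules from the table above; paths are relative to the RTEMS source root.
MODULES = ["cpukit/rtems/src", "cpukit/score/src", "cpukit/libcsupport"]


def count_sources(module_dir):
    """Count the .c and .h files below module_dir (recursively)."""
    return sum(
        1
        for p in Path(module_dir).rglob("*")
        if p.is_file() and p.suffix in (".c", ".h")
    )


def run_cobra(module_dir, log_path):
    """Run `cobra -recursive '*.[ch]'` inside module_dir and save its raw output."""
    result = subprocess.run(
        ["cobra", "-recursive", "*.[ch]"],  # same command as used earlier in the thread
        cwd=module_dir,
        stdin=subprocess.DEVNULL,  # assumption: avoids blocking if Cobra waits for input
        capture_output=True,
        text=True,
        check=False,
    )
    Path(log_path).write_text(result.stdout + result.stderr)
    return result.returncode


def analyse(rtems_root, out_dir, modules=MODULES, runner=run_cobra):
    """Run the analysis per module; return {module: (file_count, exit_code)}."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    summary = {}
    for module in modules:
        module_dir = Path(rtems_root) / module
        log_path = out / (module.replace("/", "_") + ".log")
        summary[module] = (count_sources(module_dir), runner(module_dir, log_path))
    return summary
```

Calling `analyse("/path/to/rtems", "cobra-logs")` would leave one raw log per module in `cobra-logs/`. Keeping the raw output untouched means the summarizing and filtering steps can be changed later without re-running Cobra.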

Next I’m planning to extend the script to summarize warnings and try filtering directories that may generate a lot of noise (for example BSP headers).
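The filtering step could be sketched roughly as follows. This is only an illustration under a loud assumption: it treats every warning as a line that starts with `path:line:`, which needs to be checked against the real Cobra output before relying on it. The function names and the `bsps/` prefix in the usage note are hypothetical.

```python
from collections import Counter


def warning_path(line):
    """Return the leading file path of a warning line, or None if there is none.

    Assumption: warnings look like "path/to/file.c:123: message".
    """
    head, sep, _ = line.partition(":")
    head = head.strip()
    if head.startswith("./"):
        head = head[2:]
    if not sep or not head.endswith((".c", ".h")):
        return None
    return head


def partition_warnings(lines, noisy_prefixes):
    """Split lines into (kept, filtered) by whether their path has a noisy prefix."""
    prefixes = tuple(noisy_prefixes)
    kept, filtered = [], []
    for line in lines:
        path = warning_path(line)
        if path is not None and path.startswith(prefixes):
            filtered.append(line)
        else:
            kept.append(line)  # lines without a recognisable path are kept
    return kept, filtered


def count_by_top_dir(lines):
    """Count warnings per top-level directory (e.g. cpukit, bsps)."""
    counts = Counter()
    for line in lines:
        path = warning_path(line)
        if path is not None:
            counts[path.split("/", 1)[0]] += 1
    return counts
```

For example, `partition_warnings(log_lines, ["bsps/"])` would move anything reported under a `bsps/` path into the filtered list, and `count_by_top_dir(log_lines)` gives a quick view of where most of the noise comes from. Filtered warnings are returned rather than dropped, so they can still be reviewed separately.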

Would it make sense to focus the analysis mainly on cpukit/score and cpukit/rtems first and treat BSP directories separately?

Yes, we would prefer to begin within the cpukit.

Thanks for the clarification. I’ll focus the experiments within cpukit and continue exploring the modules there.

I ran Cobra across the cpukit modules (score, rtems, and libcsupport). These runs processed several hundred files but produced no warnings. Earlier runs showed some warnings in BSP headers, so I’m planning to compare the cpukit and BSP directories to see where most of the static analysis noise originates.

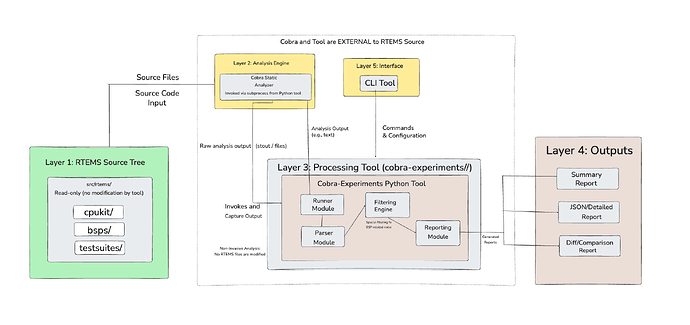

Hi, I’m sharing my proposed Cobra integration design (an external tool plus filtering for BSP noise), based on these experiments. I would appreciate feedback on how well it fits the workflow and on the filtering approach.