Thank you for the resources! I would like to share my progress on setting up CAN communication on the BeagleBone Black (BBB) under Linux. Both the hardware setup and the initial communication tests using the onboard DCAN controllers are now complete and working.

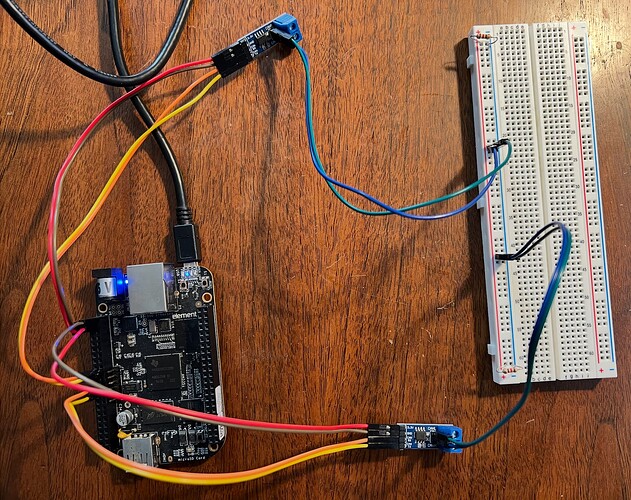

- Hardware Setup

I configured a minimal CAN network on a single BBB using both DCAN interfaces.

DCAN1 (P9.24 / P9.26) is connected to one CAN transceiver.

DCAN0 (P9.19 / P9.20) is connected to another CAN transceiver.

The CANH and CANL lines from both transceivers are connected together to form a shared CAN bus.

Two 120 ohm termination resistors are placed across CANH and CANL.

This setup allows the BBB to simulate two CAN nodes on the same physical bus.

- Linux Configuration

I enabled CAN functionality using pin multiplexing:

config-pin p9.24 can

config-pin p9.26 can

config-pin p9.19 can

config-pin p9.20 can

Both CAN interfaces were brought up using the same bitrate:

sudo ip link set can0 up type can bitrate 125000

sudo ip link set can1 up type can bitrate 125000

I verified that both interfaces are active using:

ip link show

The output shows that both can0 and can1 are in the UP state.

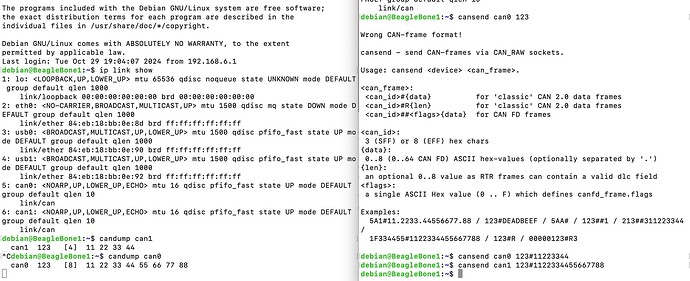
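For repeatability, the configuration steps above can be collected into one small script. This is only a sketch under a few assumptions: the helper names `run` and `setup_can` and the `DRY_RUN` / `BITRATE` variables are made up for illustration, and the script brings each interface down first because `ip link` normally refuses to change the bitrate of an interface that is already up. With `DRY_RUN=1` (the default here) it only prints the commands it would execute.

```shell
#!/bin/sh
# Sketch: configure the DCAN pins and bring up can0/can1 in one step.
# DRY_RUN and BITRATE are illustrative variables, not part of any tool.
DRY_RUN="${DRY_RUN:-1}"        # 1 = only print the commands
BITRATE="${BITRATE:-125000}"   # same bitrate on both interfaces

# Print the command in dry-run mode, otherwise execute it.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

setup_can() {
    # Pin multiplexing: DCAN1 on P9.24/P9.26, DCAN0 on P9.19/P9.20.
    for pin in p9.24 p9.26 p9.19 p9.20; do
        run config-pin "$pin" can
    done
    for ifc in can0 can1; do
        # The interface has to be down before its bitrate can be set.
        run sudo ip link set "$ifc" down
        run sudo ip link set "$ifc" up type can bitrate "$BITRATE"
        # -details also reports the CAN controller state and bit timing.
        run ip -details link show "$ifc"
    done
}

setup_can
```

Running it with `DRY_RUN=0` on the BBB would execute the commands instead of printing them.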

- CAN Communication Test

I performed bidirectional communication tests between can0 and can1.

Test 1: can0 to can1

In terminal 1:

candump can1

In terminal 2:

cansend can0 123#11223344

The message was successfully received on can1.

Test 2: can1 to can0

In terminal 1:

candump can0

In terminal 2:

cansend can1 123#1122334455667788

The message was successfully received on can0.
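The two manual tests above could also be automated so they can be re-run after any wiring or configuration change. The following is a rough sketch, not a verified test harness: `candump_to_frame` and `check_link` are hypothetical helper names, and the sketch assumes the default `candump` output layout (interface, ID, `[length]`, data bytes) together with its `-n` (frame count) and `-T` (timeout in milliseconds) options from can-utils.

```shell
#!/bin/sh
# Sketch: send one frame on one interface and check it arrives on the other.

# Convert one line of default candump output, e.g.
#   "  can1  123   [4]  11 22 33 44"
# into cansend notation, e.g. "123#11223344".
candump_to_frame() {
    awk '{ data = ""; for (i = 4; i <= NF; i++) data = data $i; print $2 "#" data }'
}

# usage: check_link <tx-interface> <rx-interface> <frame>
# Returns 0 if the frame received on <rx-interface> matches <frame>.
# Note: candump prints hex digits in upper case, so write frames that way.
check_link() {
    tx="$1"; rx="$2"; frame="$3"
    out="$(mktemp)"
    # Capture exactly one frame, or give up after 2 s without reception.
    candump -n 1 -T 2000 "$rx" > "$out" &
    dump_pid=$!
    sleep 1                      # give candump time to open the socket
    cansend "$tx" "$frame"
    wait "$dump_pid"
    got="$(candump_to_frame < "$out")"
    rm -f "$out"
    [ "$got" = "$frame" ]
}
```

For example, `check_link can0 can1 123#11223344 && echo "can0 to can1 OK"` would repeat Test 1, and swapping the two interface names would repeat Test 2.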

- Screenshots

I have attached screenshots showing:

CAN interface status (ip link show)

Successful transmission and reception using candump

Hardware wiring on the breadboard

- Key Observations

Both DCAN controllers can operate simultaneously on a single BBB.

The SocketCAN framework and the c_can driver function correctly.

All nodes must use the same bitrate in order to communicate. A lower bitrate means longer bit times, which generally makes the bus more tolerant of imperfect wiring, especially during initial testing and debugging. For that reason I chose 125 kbit/s rather than 500 kbit/s.

The physical layer setup, including the transceivers and termination resistors, is essential for stable operation.
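To put the bitrate observation into numbers, the sketch below computes the bit time and an upper bound on the frame time for a classic CAN frame with an 11-bit identifier. The function names are illustrative only, and the bit count uses the commonly cited worst-case bit-stuffing bound (including the 3-bit interframe space), so treat the results as estimates rather than measurements from this setup.

```shell
#!/bin/sh
# Sketch: rough timing figures for classic CAN with 11-bit identifiers.

# Duration of one bit in nanoseconds. $1 = bitrate in bit/s
bit_time_ns() {
    echo $(( 1000000000 / $1 ))
}

# Worst-case number of bits on the wire, including stuff bits and the
# 3-bit interframe space. $1 = number of data bytes (0..8)
max_frame_bits() {
    n="$1"
    echo $(( 8 * n + 47 + (34 + 8 * n - 1) / 4 ))
}

# Worst-case frame duration in microseconds.
# $1 = bitrate in bit/s, $2 = number of data bytes
frame_time_us() {
    bits="$(max_frame_bits "$2")"
    echo $(( bits * 1000000 / $1 ))
}

bit_time_ns 125000       # 8000 ns per bit at 125 kbit/s
bit_time_ns 500000       # 2000 ns per bit at 500 kbit/s
frame_time_us 125000 8   # 1080 us for a full 8-byte frame
frame_time_us 500000 8   # 270 us for the same frame
```

At 125 kbit/s each bit lasts four times longer than at 500 kbit/s, which leaves more margin for signal settling on breadboard wiring at the cost of a longer frame time.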

- Next Steps

Next, I plan to study the Linux c_can driver in detail, including mailbox management, interrupt handling, and transmit/receive paths.

I also plan to study the RTEMS CAN stack and think about how a driver for the BBB could be added to it.

Please let me know if there are any suggestions or additional tests I should perform.